Introduction

Recent advances in natural language processing (NLP) have led to the development of powerful language models such as the GPT (Generative Pre-trained Transformer)[1, 2] series, including large language models (LLM) such as ChatGPT and GPT-4 [3]. These models which are pre-trained on vast amounts of text data have demonstrated exceptional performance in a wide range of real-world applications, such as language translation, text summarization, and question-answering. In particular, human-machine interactions have been significantly improved through the ChatGPT model, making it an integral part of our daily lives.

In contrast to traditional base LLMs that are solely trained on text, ChatGPT allows for instruction fine-tuning of a pre-trained language model based on Reinforcement Learning from Human Feedback (RLHF), making it a state-of-the-art framework for reasoning and generalized text generation by leveraging human feedback.

Thus, one of the critical elements in establishing an impactful interaction with an LLM, like ChatGPT, is prompt engineering. In essence, prompt engineering is the art of crafting input prompts to guide an LLM’s responses in a more specific, relevant, and coherent manner. The choice of prompts can significantly impact the quality and direction of the chatbot’s response, making it an essential skill for developers looking to optimize chatbot interactions.

This blog-post aims to highlight the importance of prompt engineering and how it can be leveraged to optimize interactions with LLMs, especially ChatGPT. Additionally, this blog-post discusses various strategies in crafting effective prompts, and showcases the use of the OpenAI python library to conveniently access the OpenAI API from applications written in the Python language.

Understanding Prompt Engineering

Prompt engineering is an increasingly important skill set needed to converse effectively with LLMs, such as ChatGPT. A prompt is a set of instructions provided to an LLM that programs it by customizing and/or enhancing or refining its capabilities [5]. A prompt can influence subsequent interactions with an LLM by providing specific rules and guidelines for an LLM conversation with a set of initial rules. In particular, a prompt sets the context for the conversation and tells the LLM what information is important and what the desired output form and content should be. By introducing specific guidelines, prompts facilitate more structured and nuanced outputs to aid a large variety of tasks in the context of LLMs.

Thus, prompt engineering, at its core, is a practice that enables us to effectively control and guide LLM responses [4]. By crafting precise and meaningful prompts, developers can channel the output of an LLM in a manner that is coherent, relevant, and aligned with the intended purpose of the interaction.

One of the key distinctions in prompt engineering is between static and dynamic prompts. Static prompts are fixed and pre-defined inputs that are used to initiate a conversation with an LLM. For instance, a customer support chatbot may start with a static prompt like “How can I help you today?”. Dynamic prompts, on the other hand, are generated on-the-fly based on the ongoing conversation and context. They allow the LLM to adapt and respond to the specific needs and queries of the developer in real-time. Dynamic prompts are particularly useful in situations where the conversation can take multiple directions, and a one-size-fits-all approach may not be suitable.

Effective Prompting Techniques

Crafting effective dynamic prompts is key to optimizing LLM responses. By understanding the relationship between prompt characteristics and an LLM output, developers can fine-tune their prompts to achieve desired responses. Some of the essential techniques to enhance prompt effectiveness include:

Using Context-rich Prompts

Generating consistent and coherent human-like dialogue is a core goal of natural language research [6]. One of the most effective ways to guide LLM responses is by using context-rich prompts that provide specific instructions and information. By clearly conveying the context and desired outcome, developers can steer the chatbot towards generating more relevant and targeted responses. For instance, instead of using a vague prompt like “Tell me about AI,” a more context-rich prompt could be “Explain the applications of AI in healthcare.”

Leveraging Prompt Length and Specificity

Additionally, prompt length and specificity play a crucial role in influencing the length and precision of chatbot responses. Longer, more specific prompts tend to generate longer and more precise responses. For example, a prompt like “Explain the role of machine learning in financial analysis” is likely to yield a more detailed and focused response compared to a shorter, less specific prompt like “Explain machine learning.” By leveraging prompt length and specificity, developers can fine-tune the granularity and focus of LLM responses.

Tuning hyper-parameters

Specific to ChatGPT, besides prompt characteristics, developers can also control its responses by tuning hyper-parameters such as :

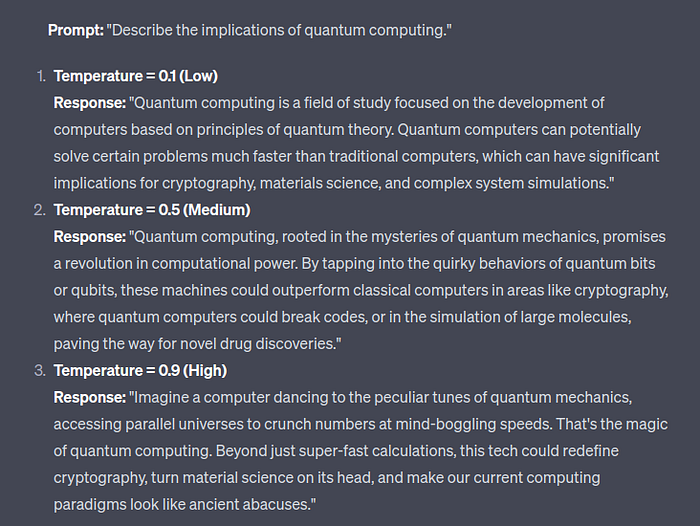

Temperature

Temperature controls the randomness of ChatGPT’s output allowing the developer to influence the creativity and exploration of the model. While a higher temperature value, such as 1.0, leads to more randomness and diversity in the generated text, a lower temperature value, like 0.2, produces more focused and deterministic responses.

In the examples shown in Fig.1, as the temperature increases, the responses become more varied and creative. On the other hand, at a very low temperature value, such as 0.1, the output is more deterministic and might often revert to more generic or commonly used phrases. At higher temperatures, the risk of generating nonsensical or overly creative responses increases, but it can also lead to unique and interesting outputs.

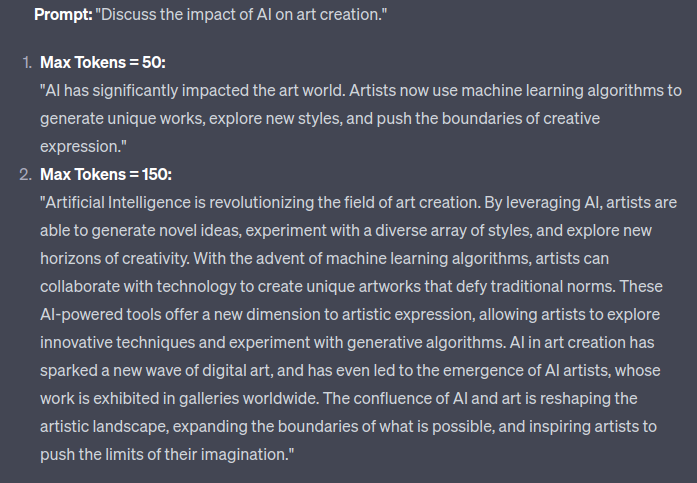

Max tokens

In the context of NLP, tokens are defined as the individual units of text, either words or characters. Thus, by adjusting max tokens, developers are able to set an upper limit on the length of the ChatGPT’s response, which can be useful for avoiding overly verbose responses.

For example, in Fig.2, by increasing the max tokens value from 50 to 100, ChatGPT generates a more detailed response on the topic of “Discuss the impact of AI on art creation”.

Top P

Also known as nucleus sampling or probabilistic sampling, this hyper-parameter allows the developer to control the diversity of ChatGPT’s output. Top P determines the probability of sampling the next token in the generated response. As shown in Fig. 3, a higher top P value, for example, 0.9, allows more choices to be considered while sampling, resulting in more diverse responses. Conversely, a lower top P value, like 0.2, limits the choices and generates more focused responses.

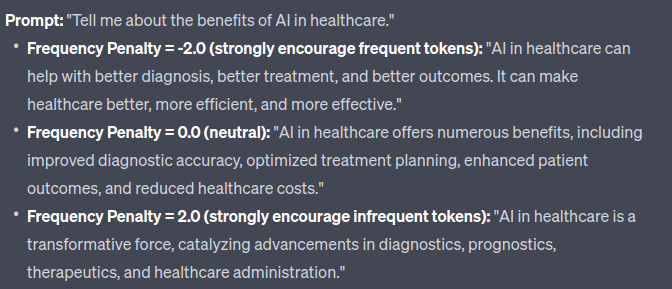

Frequency Penalty

A number between -2.0 and 2.0, frequency penalty is the hyper-parameter that controls the repetition of words or phrases in the generated output. By setting a higher frequency penalty value, developers can penalize ChatGPT for repeating the same words or phrases excessively. A positive penalty encourages the LLM to use less frequent tokens, while a negative penalty encourages the LLM to use more frequent tokens. This helps in generating more diverse and varied responses.

In Fig.4, we can see that as the “frequency penalty” value increases, the responses use less frequent tokens and become more complex and nuanced. Adjusting the “frequency penalty” parameter allows developers to control the complexity of ChatGPT’s responses, making them more suitable for specific use cases and contexts.

In conclusion, by employing context-rich prompts, leveraging prompt length and specificity, and tuning the aforementioned hyper-parameters, developers can effectively shape ChatGPT’s responses to meet specific needs.

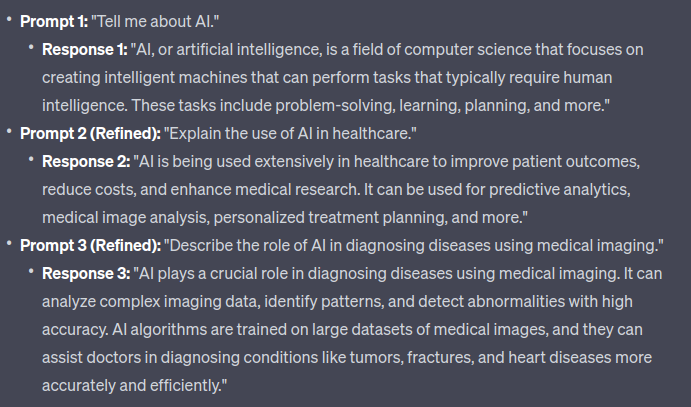

Iterative Prompt Design

Prompt engineering is an iterative process that involves continuous refinement and testing to achieve optimal LLM performance. In many cases, the initial prompts may not produce the desired outcomes, and thus, prompt engineering becomes an iterative process of fine-tuning and evaluation.

A systematic way to improve upon the LLM performance is iteratively refining the prompts in the form of adjusting the context, specificity, or wording of the prompts or even iteratively tuning the hyper-parameters. Testing the revised prompts helps developers assess their effectiveness and identify areas for further improvement.

In the example shown in Fig.5, the initial prompt was broad and resulted in a general response about AI. As the prompt was iteratively refined, the responses became more specific and relevant to the topic of interest — “AI in healthcare and medical imaging”. The iterative process allowed the developer to achieve responses that were more aligned with the intended objective.

Prompt Engineering with Python

OpenAI has provided the developer community with a python library that enables its users to interact with and implement various machine learning and artificial intelligence models and functionalities offered by OpenAI. This library provides a simple interface to OpenAI’s flagship models, such as the GPT series, for a wide range of applications, from text generation and sentiment analysis to translation and summarization.

Installation

The installation is a straight-forward process and can be installed by running the following command:

pip3 install --upgrade openaiSetting up the environment

After the installation step, the developer will need to configure the library using their account’s secret key, available on website. For usage, one can either of the following options:

Option 1: Setting the key as an environment variable

export OPENAI_API_KEY='sk-...'and then setting the api_key parameter as:

import openai

import os

from dotenv import load_dotenv, find_dotenv

_ = load_dotenv(find_dotenv()) # read local .env file

openai.api_key = os.getenv('OPENAI_API_KEY')Option 2: Directly setting the api_key parameter to its value within code

import openai

openai.api_key = "sk-..."Creating an endpoint

Once the API key is set, the developer will be able to get API responses from their OpenAI model of choice using an input query. A clean way to do this would be to create a helper function that makes it easier to dynamically generate outputs using various prompts. Consider the following example:

def get_completion(prompt, model="gpt-3.5-turbo", temp=0.0, max_tkn=100, p=0.5, freq=1.0):

messages = [{"role": "user", "content": prompt}]

response = openai.ChatCompletion.create(

model=model,

messages=messages,

temperature=temp,

max_tokens=max_tkn,

top_p=p,

frequency_penalty=freq

)

return response.choices[0].message["content"]In the above code snippet, the helper function get_completion takes in a prompt and returns the completion for that prompt all the while allowing the developer to choose their choice of hyper-parameters such as temperature, max tokens, top P and frequency penalty. This allows the developer to provide precise instructions to the LLM, directing the model toward the intended output and minimizing the chances of irrelevant or inaccurate responses. For all the code demonstrations in this blog-post, we will use OpenAI's gpt-3.5-turbo model and the chat completions API.

Working examples

Let’s look at how the helper function get_completion can be used to perform different tasks:

Summarizing

The use of delimiters, such as ```, “””, < >, <tag> </tag>, :, can clearly indicate distinct part of the input prompt as shown in the code snippet below:

text = f"""

You should express what you want a model to do by \

providing instructions that are as clear and \

specific as you can possibly make them. \

This will guide the model towards the desired output, \

and reduce the chances of receiving irrelevant \

or incorrect responses. Don't confuse writing a \

clear prompt with writing a short prompt. \

In many cases, longer prompts provide more clarity \

and context for the model, which can lead to \

more detailed and relevant outputs.

"""

prompt = f"""

Summarize the text delimited by triple backticks \

into a single sentence.

```{text}```

"""

response = get_completion(prompt, max_tkn=500)

print(response)The output:

To guide a model towards the desired output and reduce irrelevant or incorrect responses, it is

important to provide clear and specific instructions, which can be achieved through longer prompts

that offer more clarity and context.“Few-shot” prompting

Few-shot prompting refers to the technique where an LLM is given a small number of examples (or “shots”) to infer or generalize a particular task or concept. Instead of requiring extensive fine-tuning or large datasets, few-shot prompting leverages the vast knowledge and generalization abilities of pre-trained models. By providing a handful of examples within the prompt, developers can guide the model to produce desired outputs for tasks it hasn’t been explicitly trained on. This technique showcases the model’s adaptability and capability to handle diverse tasks with minimal instruction.

prompt = f"""

Your task is to answer in a consistent style.

<child>: Teach me about patience.

<grandparent>: The river that carves the deepest \

valley flows from a modest spring; the \

grandest symphony originates from a single note; \

the most intricate tapestry begins with a solitary thread.

<child>: Teach me about resilience.

"""

response = get_completion(prompt)

print(response)The output:

<grandparent>: Resilience is like a mighty oak tree that withstands the strongest storms,

bending but never breaking. It is the unwavering determination to rise again after every fall,

and the ability to find strength in adversity. Just as a diamond is formed under immense pressure,

resilience allows us to shine even in the face of challenges.In the code snippet above, as the model is provided with few-shot examples of the conversation, it responds in a similar tone to the next instruction.

Asking for a structured output

In the code snippet below, the model is asked to generate the output in the JSON format as:

prompt = f"""

Generate a list of three made-up book titles along \

with their authors and genres.

Provide them in JSON format with the following keys:

book_id, title, author, genre.

"""

response = get_completion(prompt, max_tkn=500)

print(response)The output:

{

"books": [

{

"book_id": 1,

"title": "The Enigma of Elysium",

"author": "Evelyn Sinclair",

"genre": "Mystery"

},

{

"book_id": 2,

"title": "Whispers in the Wind",

"author": "Nathaniel Blackwood",

"genre": "Fantasy"

},

{

"book_id": 3,

"title": "Till Death Do Us Part",

"author": "Isabella Rose",

"genre": "Romance"

}

]

}For more detailed use, developers are suggested to check out OpenAI Cookbook — a culmination of example code for accomplishing common tasks with the OpenAI API.

Conclusion

In conclusion, prompt engineering is an indispensable skill for developers looking to harness the full potential of AI chatbots like ChatGPT. It’s an art that involves carefully crafting prompts, refining them iteratively, and using advanced techniques to shape the responses to specific needs. With the right approach, developers can achieve remarkable improvements in LLM performance, turning them into highly effective tools for various applications.

References

[1] Improving language understanding with unsupervised learning

[2] Better language models and their implications

[4] A Prompt Pattern Catalog to Enhance Prompt Engineering with ChatGPT

[6] Don’t Say That! Making Inconsistent Dialogue Unlikely with Unlikelihood Training

Written by: Sertis Vision Lab

Originally published at https://www.sertiscorp.com/sertis-vision-lab