Have you ever felt tired of writing every piece of code from scratch and wished for a tool that would save you time? Try oneML!

Most software developers have, at least once, struggled to leverage and deploy machine learning effectively because of complex infrastructure and the wide variety of models and frameworks to choose from. Deployment also involves high computational demands, heterogeneous production environments, and multiple programming languages.

oneML was developed specifically to tackle that problem. As a fully-fledged C++ SDK with an API for a variety of applications, it can be deployed on any hardware and operating system. Because it is built from several task-specific macro modules, oneML aims for efficient performance and is easy to extend by simply adding a new module.

In this article, we would like to demonstrate the high performance and usability of oneML. Try it yourself with this introduction and the sample apps, which showcase oneML functionality and possible use cases: face detection, face identification, face embedding, and vehicle detection. Simply download oneML and follow the instructions in this article.

Unlock the power of high performance and usability with oneML, a product designed to make developers' lives easier. There is no need to waste time and resources reinventing the wheel; build something awesome on top of it instead!

What is oneML?

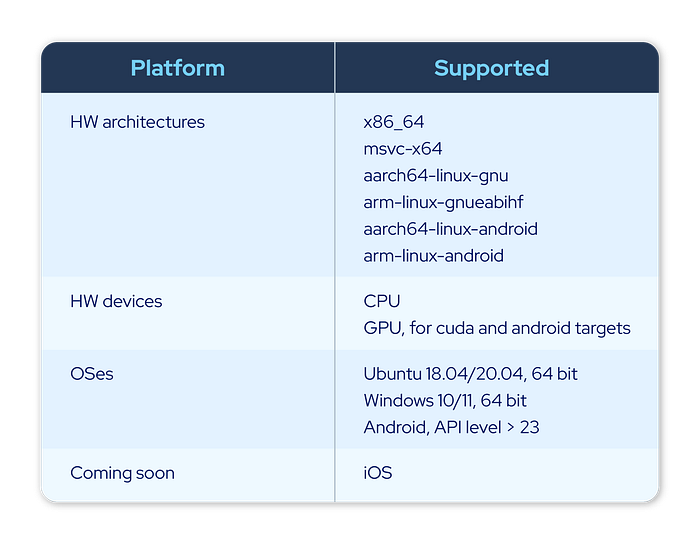

oneML is a fully-fledged C++ software development kit (SDK) that provides APIs for a number of different AI/ML applications. It aims for efficient performance and is easy to extend by simply adding a new module. Potentially, it can be deployed on any target, including CPU and CUDA-enabled GPUs, and on any platform, such as Android, iOS, embedded Linux, Linux, and Windows. Moreover, the oneML library provides API bindings in other programming languages such as Java, Python, C#, and Golang. oneML is also built and tested automatically every time something changes in the codebase.

Getting Started

This section describes the steps for quickly testing the oneML library. We will download the oneML library and models, then build and run sample C++ applications on the x86_64 architecture. There are separate guides for Linux and Windows users.

NOTE: For other languages and architectures, please refer to the Setup and Build section.

For Linux users

Step 1: Build a docker image

We recommend setting up an environment for oneML with a docker container. A docker image can be built from the Dockerfile in the docker folder. Here, we are going to build a base image and another image for the CPU runtime. Run the commands below to build the images.

docker build -t oneml-bootcamp:cpu-base -f docker/Dockerfile --build-arg base_image=ubuntu:20.04 .

docker build -t oneml-bootcamp:cpu -f docker/Dockerfile.cpu --build-arg base_image=oneml-bootcamp:cpu-base .

Step 2: Build sample applications

We will run a docker container with the oneml-bootcamp:cpu image that we built in the previous step and mount this repository folder into the container at the /workspace path. We execute bash inside the container to run the following steps.

docker run -it --name oneml-bootcamp -v $PWD:/workspace oneml-bootcamp:cpu /bin/bash -c "cd /workspace && /bin/bash"

Then, inside the container, we download the oneML library and models, and build the sample C++ applications with the build.sh script.

./build.sh -t x86_64 -cc --clean

The oneML library and models are downloaded to assets/binaries/x86_64.

assets/binaries/x86_64

├── bindings

├── config.yaml

├── include

├── lib

├── oneml-bootcamp-x86_64.tar.gz

├── README.md

└── share

The compiled C++ applications are in the bin folder.

bin

├── face_detector

├── face_embedder

├── face_id

├── face_verification

└── vehicle_detector

Step 3: Run sample applications

We will run the face detection and vehicle detection applications. Our sample applications run oneML models on images in the assets/images folder. Change the directory to the bin folder.

cd bin

To run the face detection application, simply run this command.

$ ./face_detector

Faces: 1

Face 0

Score: 0.997635

Pose: Front

BBox: [(43.740112, 85.169624), (170.153168, 173.094971)]

Landmark 0: (109.936066, 95.690453)

Landmark 1: (149.844360, 94.360649)

Landmark 2: (130.055618, 117.397400)

Landmark 3: (112.128708, 137.498520)

Landmark 4: (146.398651, 136.513870)

To run the vehicle detection application, simply run this command.

$ ./vehicle_detector

Vehicles: 8

Vehicle 0

Score: 0.907127

BBox[top=302.584076,left=404.385437,bottom=486.317841,right=599.864502]

Vehicle 1

Score: 0.899688

BBox[top=301.987000,left=651.770996,bottom=432.352997,right=920.845947]

Vehicle 2

Score: 0.875428

BBox[top=314.573608,left=143.590546,bottom=445.937286,right=367.556488]

Vehicle 3

Score: 0.873904

BBox[top=237.489685,left=100.689484,bottom=303.421906,right=279.191864]

Vehicle 4

Score: 0.842179

BBox[top=243.125473,left=328.062103,bottom=307.927338,right=473.017853]

Vehicle 5

Score: 0.822146

BBox[top=238.680069,left=563.760925,bottom=308.430756,right=705.746033]

Vehicle 6

Score: 0.653955

BBox[top=215.012100,left=477.994904,bottom=252.589249,right=547.705505]

Vehicle 7

Score: 0.620528

BBox[top=213.333237,left=641.528503,bottom=249.301697,right=742.615601]

For Windows users

Step 0: Install required tools

To build oneML C++ applications on Windows, install the following tools:

- Microsoft Visual Studio 16 2019 or newer

- CMake 3.17 or newer

Step 1: Build sample applications

We will download the oneML library and models, and build the sample C++ applications with the build.bat script.

.\build.bat -t msvc-x64 -cc --clean

The oneML library and models are downloaded to assets\binaries\msvc-x64.

assets\binaries\msvc-x64

├── bindings

├── config.yaml

├── include

├── lib

├── oneml-bootcamp-msvc-x64.tar.gz

├── README.md

└── share

The compiled C++ applications are in the bin\Release folder.

bin\Release

├── face_detector.exe

├── face_embedder.exe

├── face_id.exe

├── face_verification.exe

└── vehicle_detector.exe

Step 2: Run sample applications

We will run the face detection and vehicle detection applications. Our sample applications run oneML models on images in the assets\images folder. Change the directory to the bin\Release folder.

cd bin\Release

To run the face detection application, simply run this command.

.\face_detector.exe

Result

Faces: 1

Face 0

Score: 0.997635

Pose: Front

BBox: [(43.740120, 85.169617), (170.153168, 173.094971)]

Landmark 0: (109.936081, 95.690453)

Landmark 1: (149.844376, 94.360649)

Landmark 2: (130.055634, 117.397392)

Landmark 3: (112.128716, 137.498520)

Landmark 4: (146.398636, 136.513870)

To run the vehicle detection application, simply run this command.

.\vehicle_detector.exe

Output

Vehicles: 8

Vehicle 0

Score: 0.907128

BBox[top=302.583893,left=404.385590,bottom=486.317871,right=599.864380]

Vehicle 1

Score: 0.899688

BBox[top=301.986755,left=651.771118,bottom=432.352966,right=920.846069]

Vehicle 2

Score: 0.875427

BBox[top=314.573425,left=143.590775,bottom=445.937408,right=367.556946]

Vehicle 3

Score: 0.873901

BBox[top=237.489792,left=100.689354,bottom=303.421936,right=279.191772]

Vehicle 4

Score: 0.842178

BBox[top=243.125641,left=328.062012,bottom=307.927002,right=473.017883]

Vehicle 5

Score: 0.822146

BBox[top=238.680267,left=563.760864,bottom=308.430756,right=705.746338]

Vehicle 6

Score: 0.653957

BBox[top=215.012009,left=477.994873,bottom=252.589355,right=547.705811]

Vehicle 7

Score: 0.620529

BBox[top=213.333160,left=641.528442,bottom=249.301712,right=742.615662]

Setup and Build

Depending on your OS and on which programming language you would like to use, the setup and build process is going to be slightly different. Please refer to the relevant section below.

Linux

On Linux machines, it is always advised to use docker to create a reproducible workspace and not have to worry about dependencies. If you don’t want to use docker, feel free to set up your local environment to match the one provided in our Dockerfiles.

Docker build

Multiple Dockerfiles are available. Which one to use depends on the hardware targeted for deployment and on whether the device requires specific build tools or dependencies.

There are two different types of docker images:

- base docker images are supposed to provide all the common tools and libraries shared by all the other, more specific, images built on top of them

- device/OS-specific, e.g. cpu, gpu, android
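The layering described above, a shared base image with a device-specific image built on top of it, could also be scripted. The following is a minimal sketch that reproduces the image tags, Dockerfile paths, and base images used by the commands in this section. `docker_build_commands` and `BASE_IMAGES` are hypothetical names introduced for illustration and are not part of the repository.

```python
from typing import List

# Upstream images passed to the base Dockerfile, as used in this article.
BASE_IMAGES = {
    "cpu": "ubuntu:20.04",
    "gpu": "nvidia/cuda:11.5.1-cudnn8-runtime-ubuntu20.04",
    "android": "ubuntu:20.04",
}


def docker_build_commands(variant: str, repo: str = "oneml-bootcamp") -> List[List[str]]:
    """Return the two `docker build` commands (base image, then specific image)."""
    # Only the GPU variant has its own base tag; Android reuses the CPU base.
    base_tag = "gpu-base" if variant == "gpu" else "cpu-base"
    base = [
        "docker", "build", "-t", f"{repo}:{base_tag}",
        "-f", "docker/Dockerfile",
        "--build-arg", f"base_image={BASE_IMAGES[variant]}", ".",
    ]
    specific = [
        "docker", "build", "-t", f"{repo}:{variant}",
        "-f", f"docker/Dockerfile.{variant}",
        "--build-arg", f"base_image={repo}:{base_tag}", ".",
    ]
    return [base, specific]
```

Each returned list could be passed to `subprocess.run(command, check=True)` from the repository root, in order, so that the base image exists before the specific image is built.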

For a normal CPU environment:

docker build -t oneml-bootcamp:cpu-base -f docker/Dockerfile --build-arg base_image=ubuntu:20.04 .

docker build -t oneml-bootcamp:cpu -f docker/Dockerfile.cpu --build-arg base_image=oneml-bootcamp:cpu-base .

For a GPU environment:

docker build -t oneml-bootcamp:gpu-base -f docker/Dockerfile --build-arg base_image=nvidia/cuda:11.5.1-cudnn8-runtime-ubuntu20.04 .

docker build -t oneml-bootcamp:gpu -f docker/Dockerfile.gpu --build-arg base_image=oneml-bootcamp:gpu-base .

For an Android environment:

docker build -t oneml-bootcamp:cpu-base -f docker/Dockerfile --build-arg base_image=ubuntu:20.04 .

docker build -t oneml-bootcamp:android -f docker/Dockerfile.android --build-arg base_image=oneml-bootcamp:cpu-base .

Apps build

We provide a bash script that will set up the project, prepare the environment and build all the artifacts necessary to run the sample applications.

./build.sh --help

This prints a description of the build script. Here are some examples:

To build C++ apps for x86_64 target:

./build.sh -t x86_64 -cc --clean

To build Python apps for aarch64-linux-gnu target:

./build.sh -t aarch64-linux-gnu -py --clean

To build C# apps for x86_64 target:

./build.sh -t x86_64 -cs --clean

To build Java apps for x86_64 target:

./build.sh -t x86_64 -jni --clean

To build Android apps for aarch64-linux-android target:

./build.sh -t aarch64-linux-android -jni --clean

On x86_64, CUDA-enabled GPU is also supported. To build C++ apps for x86_64-cuda target:

./build.sh -t x86_64-cuda -cc --clean

If --clean is not specified, the existing oneML artifacts will be used and the old build files will not be deleted.

Windows

We provide support for our library and sample applications for Windows OS as well.

Unfortunately, there is no easy way to use our Dockerfiles in this environment, thus the user has to rely on their own local environment and make sure all the dependencies are installed before proceeding with the build process and running the applications.

Requirements

Only 64-bit Windows 10/11 is currently supported.

Dependencies

The following dependencies must be installed in order for the project to build and run successfully:

- Microsoft Visual Studio 16 2019 or newer

- curl to pull some data from the internet

- tar to unpack some archives

- CMake 3.17 or newer (for C++ build only)

- Python 3.6 or newer and pip (for Python build only)

- .NET6 (for C# build only)

- JDK 1.8 (for Java build only)

- Android Studio (for Android build only)

- Golang 1.15 (for Golang build only)

- PowerShell, a recent version (for any build except C++)
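Before starting a build, it can help to confirm that the command-line tools from this list are reachable. The sketch below uses Python's `shutil.which` to look them up on PATH. The executable names (`cmake`, `dotnet`, `java`, `go`, and so on) are assumptions about how each tool is usually exposed, the helper `missing_tools` is hypothetical, and the check covers neither tool versions nor Visual Studio or Android Studio.

```python
import shutil
from typing import Callable, List, Optional

# Executable names are assumptions; adjust them to match the local installation.
TOOLS_BY_BUILD = {
    "common": ["curl", "tar"],
    "cpp": ["cmake"],
    "python": ["python", "pip"],
    "csharp": ["dotnet"],
    "java": ["java"],
    "golang": ["go"],
}


def missing_tools(
    build: str,
    which: Callable[[str], Optional[str]] = shutil.which,
) -> List[str]:
    """Return the tools needed for `build` that cannot be found on PATH."""
    required = TOOLS_BY_BUILD["common"] + TOOLS_BY_BUILD[build]
    if build != "cpp":
        # PowerShell is listed as required for every build except C++.
        required = required + ["powershell"]
    return [tool for tool in required if which(tool) is None]
```

Calling `missing_tools("python")` returns an empty list when everything was found. The `which` parameter exists only so the lookup can be replaced in tests.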

Apps build

We provide a batch script that will set up the project, prepare the environment and build all the artifacts necessary to run the sample applications.

build.bat --help

This prints a description of the build script. Here are some examples:

To build C++ apps for msvc-x64 target:

build.bat -t msvc-x64 -cc --clean

To build Python apps for msvc-x64 target:

build.bat -t msvc-x64 -py --clean

To build C# apps for msvc-x64 target:

build.bat -t msvc-x64 -cs --clean

To build Java apps for msvc-x64 target:

build.bat -t msvc-x64 -jni --clean

If --clean is not specified, the existing oneML artifacts will be used and the old build files will not be deleted.
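The Linux and Windows build commands follow the same pattern: a script, a target passed with `-t`, a language flag, and an optional `--clean`. As a rough sketch of that pattern, the helper below assembles the argument list for either script. Only the flags shown in this article are used. `build_command` and `LANGUAGE_FLAGS` are hypothetical names, and the script is assumed to be invoked from the repository root.

```python
import platform
from typing import List, Optional

# Language flags exactly as they appear in the examples in this article.
LANGUAGE_FLAGS = {"cpp": "-cc", "python": "-py", "csharp": "-cs", "java": "-jni"}


def build_command(
    target: str,
    language: str,
    clean: bool = True,
    system: Optional[str] = None,
) -> List[str]:
    """Assemble the build script invocation for the given target and language."""
    system = system or platform.system()
    script = "build.bat" if system == "Windows" else "./build.sh"
    command = [script, "-t", target, LANGUAGE_FLAGS[language]]
    if clean:
        # Without --clean, existing artifacts and old build files are reused.
        command.append("--clean")
    return command
```

For example, `build_command("x86_64", "cpp", system="Linux")` yields the same arguments as the first Linux example, and the result could be handed to `subprocess.run`.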

CUDA

Currently, the x86_64-cuda target supports only the CUDA 11.x runtime, and only on Linux. We also plan to support CUDA 10.2 in the future. Moreover, it is built with backward compatibility in mind. The x86_64-cuda target supports the following CUDA compute capabilities:

- 7.5

- 7.0

- 6.1

- 6.0

- 5.2

- 5.0

Compute capabilities newer than 7.5 might not work.
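A deployment script could compare a GPU's compute capability against this list before choosing the x86_64-cuda target. The sketch below is one way to do that, assuming the capability is already known as a string such as "7.5". The function name and the three status labels are hypothetical and do not come from oneML.

```python
from typing import Tuple

# Compute capabilities listed for the x86_64-cuda target in this article.
LISTED = {(7, 5), (7, 0), (6, 1), (6, 0), (5, 2), (5, 0)}


def parse_capability(text: str) -> Tuple[int, int]:
    """Turn a string such as '7.5' into the tuple (7, 5)."""
    major, minor = text.strip().split(".")
    return int(major), int(minor)


def capability_status(text: str) -> str:
    """Classify a compute capability against the listed ones."""
    capability = parse_capability(text)
    if capability in LISTED:
        return "listed"
    if capability > max(LISTED):
        # Newer than 7.5: the article says these might not work.
        return "newer-than-listed"
    return "not-listed"
```

Tuples are compared element by element, so (8, 6) sorts after (7, 5) and is reported as newer than anything listed.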

API Reference

Usage

More details about usage can be found in each language-specific folder inside the apps folder.

For all the applications, the log level of oneML can be set through the ONEML_CPP_MIN_LOG_LEVEL environment variable, which accepts one of the following values:

- INFO, to log all the information

- WARNING, to log only warnings and more critical information

- ERROR, to log only errors and fatal crashes

- FATAL, to only log fatal crashes
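When a sample application is launched from a script, the variable can be set for that process only. The following sketch validates the level against the four values above and passes it to the child process through its environment. `env_with_log_level` and `run_app` are hypothetical helpers, and the command to run is supplied by the caller.

```python
import os
import subprocess
from typing import Dict, List, Optional

LOG_LEVELS = ("INFO", "WARNING", "ERROR", "FATAL")


def env_with_log_level(level: str, base: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Return a copy of the environment with ONEML_CPP_MIN_LOG_LEVEL set."""
    if level not in LOG_LEVELS:
        raise ValueError(f"unknown log level {level!r}; expected one of {LOG_LEVELS}")
    env = dict(os.environ if base is None else base)
    env["ONEML_CPP_MIN_LOG_LEVEL"] = level
    return env


def run_app(command: List[str], level: str = "WARNING") -> int:
    """Run a sample application with the given log level and return its exit code."""
    return subprocess.run(command, env=env_with_log_level(level)).returncode
```

From the bin folder on Linux, `run_app(["./face_detector"], "ERROR")` would run the face detector while logging only errors and fatal crashes.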

Use cases

oneML covers a wide variety of face-related and ALPR-related applications:

1. Face or face mask detection: detecting the number of faces in an image and reporting the confidence score, bounding box, landmarks, and pose of each face

2. Face embedding: transforming an image into an embedding which is a list of numbers

3. Face ID: registering a new identity into a database, validating images or embeddings, and predicting an identity based on identities in the database by calculating nearest identity distance, combined identity distance, and true/false identifiability of the image

4. PAD (Presentation Attack Detection): estimating the probability that the image is a spoof

5. Challenge response: performing liveness detection on images or video by detecting blinks

6. Vehicle analysis application: detecting vehicles and numbers of vehicles using confidence score and bounding box, classifying vehicle, detecting make or model, as well as detecting license plates

7. Utils: providing an additional set of utilities such as reading an image from file and cropping and aligning a face in an image
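The face detection results named in item 1 (confidence score, pose, bounding box, and landmarks) map naturally onto a small record type. As an illustration only, the sketch below parses the text printed by the face_detector sample shown earlier into such records. The `Face` class and `parse_faces` function are hypothetical and are not part of the oneML API, and the parser assumes the output keeps the line layout shown in this article.

```python
import re
from dataclasses import dataclass, field
from typing import List, Tuple

Point = Tuple[float, float]

# Matches a coordinate pair such as "(43.740112, 85.169624)".
_PAIR = re.compile(r"\(\s*([-\d.]+)\s*,\s*([-\d.]+)\s*\)")

SAMPLE = """Faces: 1
Face 0
Score: 0.997635
Pose: Front
BBox: [(43.740112, 85.169624), (170.153168, 173.094971)]
Landmark 0: (109.936066, 95.690453)
Landmark 1: (149.844360, 94.360649)
Landmark 2: (130.055618, 117.397400)
Landmark 3: (112.128708, 137.498520)
Landmark 4: (146.398651, 136.513870)
"""


@dataclass
class Face:
    score: float = 0.0
    pose: str = ""
    bbox: Tuple[Point, Point] = ((0.0, 0.0), (0.0, 0.0))
    landmarks: List[Point] = field(default_factory=list)


def _points(text: str) -> List[Point]:
    return [(float(x), float(y)) for x, y in _PAIR.findall(text)]


def parse_faces(output: str) -> List[Face]:
    """Parse the face_detector sample output into a list of Face records."""
    faces: List[Face] = []
    for raw in output.splitlines():
        line = raw.strip()
        if re.fullmatch(r"Face \d+", line):
            faces.append(Face())  # a "Face N" line starts a new record
        elif not faces:
            continue  # skip the "Faces: N" header and anything before the first face
        elif line.startswith("Score:"):
            faces[-1].score = float(line.split(":", 1)[1])
        elif line.startswith("Pose:"):
            faces[-1].pose = line.split(":", 1)[1].strip()
        elif line.startswith("BBox:"):
            first, second = _points(line)
            faces[-1].bbox = (first, second)
        elif line.startswith("Landmark"):
            faces[-1].landmarks.extend(_points(line))
    return faces
```

Calling `parse_faces(SAMPLE)` gives one `Face` with five landmarks. A program that links against oneML directly would read these values through the SDK instead of parsing text.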

oneML Supported Components

On the customer side, there is no prerequisite apart from having a compatible deployment environment with at least one combination of supported programming languages, operating systems, and target hardware and architectures. If the environment has high computational power, the SDK will run faster but it won’t affect the accuracy.

NOTE:

A licensing system is integrated into oneML providing features and usage control such as license keys, online or offline activation, expiration date and trial.

If you are interested in trying it out for free for a limited time, explore the public repository via the link below.

Contact us for more information: https://www.sertiscorp.com/one-ml